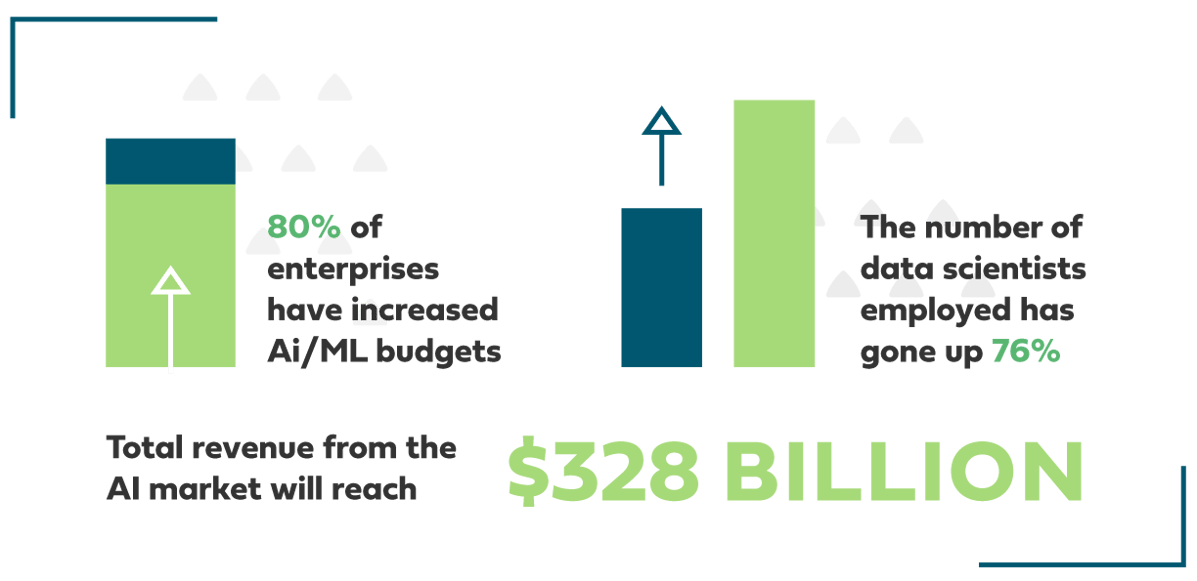

According to a study from Algorithmia, more than 80% of enterprises have increased their artificial intelligence (AI)/machine learning (ML) budgets over the past year.

Not only that, the number of data scientists employed by organizations has gone up by 76%.

These increases in budget and hiring help explain why IDC projects that total revenue from the AI market (hardware, software, services) will reach $328 billion this year.

As impressive as these numbers are, they come with a downside: this new investment isn't yet translating into AI/ML models reaching production faster.

In fact, that same Algorithmia study found that 64% of organizations have seen the time it takes to move a model from data science to production increase, despite the added investment.

That’s a problem, and it highlights the very real challenges enterprises encounter when trying to scale their AI/ML efforts. These challenges include:

- The availability and quality of the data needed to support AI/ML

- Ensuring proper governance of data, especially when it comes to government regulations like GDPR

- Addressing unfairness and bias that often creep into AI/ML models

At best, these and other challenges greatly slow down an organization’s AI/ML efforts. At worst, they can lead to outright project failures, creating sunk costs that can’t be recovered.

So how can your organization avoid these pitfalls? What are the steps you can take to navigate the complexities of capturing and governing the vast amount of data necessary to power AI/ML?

1. Walk before you run

As with any relatively new technology, adopting AI/ML is a process.

Before you start your first model, assess your organization's ability to adopt new technologies, along with how well you currently govern your data. Ask yourself:

- Do you have processes already in place for the sound governance of data?

- If so, can they be easily adapted for AI/ML?

- If not, how will you create workflows that ensure governance without running afoul of regulations or user privacy?

Once you've answered these questions, keep your initial AI/ML models small in scope but with measurable results. Don't try to take on too much too early; instead, invest incrementally based on your progress.

2. Scale deliberately

Taking your first AI/ML model into production is exciting. Even a relatively low-hanging win, like deploying a chatbot on your website, is worth a victory lap.

Before you go all-in on AI/ML, though, it's critical to recognize that success is difficult to replicate at scale.

The more models you attempt to build and deploy, the more people and workflows you will need to bring on board. You also need to scale the technology itself, which means more data to store, more compute to pay for, and more people requiring access to potentially sensitive data.

3. Keep an eye on your costs

The public cloud promises seemingly endless capacity. That capacity is critical for managing the vast amount of unstructured data that powers AI/ML.

At some point, though, your success can actually become a detriment. The cloud is cheap … until it isn’t, and it’s easy to lose sight of just how much storage and compute you’re using on your AI/ML projects.

To avoid this, anticipate the need to adopt a hybrid cloud model as your AI/ML operations scale. That way, you can still use the scale and flexibility of the cloud while keeping the more expensive aspects of building and deploying models on-premises, where their costs are easier to control.

Another area to keep an eye on is regulatory compliance. Navigating GDPR, in particular, can easily take more time and resources than you expect, especially when you’re forced to obscure or scrub data for privacy reasons at scale.

As with compliance, be proactive in planning how you will deal with unfairness and bias. AI/ML models are complicated to build and even harder for the outside public to fully understand.

A model that assesses credit ratings, for example, can appear unfair to certain segments of society due to a number of factors that aren't readily apparent. To avoid such scenarios, invest in oversight of your AI/ML efforts, with a focus on explaining (and, if need be, defending) your models.

4. Get help if (and when) you need it

Of course, not every organization is able or willing to invest heavily to get their AI/ML operations up and running.

In that case, working with a third party like Redapt can help you bridge the gaps that keep you from successfully adopting and scaling AI/ML. We can:

- Help you understand the costs from the beginning of your adoption process

- Provide you with recommendations on how to scale affordably

- Create rigorous guidelines for governance and combating bias

- Rapidly take you from zero to multiple models in production with our ML Accelerator program

To learn much more about adopting AI/ML, check out our free deep dive into advanced analytics. And for help kick-starting your own capabilities, reach out to one of our experts.