Enterprises today work with vast amounts of data from many sources and in many formats. Integrating, processing, and managing that data efficiently is critical to gaining meaningful insights and making informed decisions. Azure Data Factory (ADF), a cloud-based data integration service, connects to Snowflake, a cloud data platform, through a built-in connector, and together they provide a robust foundation for data integration and analytics. In this blog, we explore how Azure Data Factory works with Snowflake, using a pipeline that copies Snowflake data into Azure Data Lake Storage as an example.

Understanding Azure Data Factory and Snowflake Integration

A key strength of Azure Data Factory is its ability to bridge different data sources, processing platforms, and storage solutions, handling data movement, transformation, and orchestration between them. Snowflake, for its part, is known for its cloud-native data warehousing capabilities, offering scalable, high-performance data storage and processing.

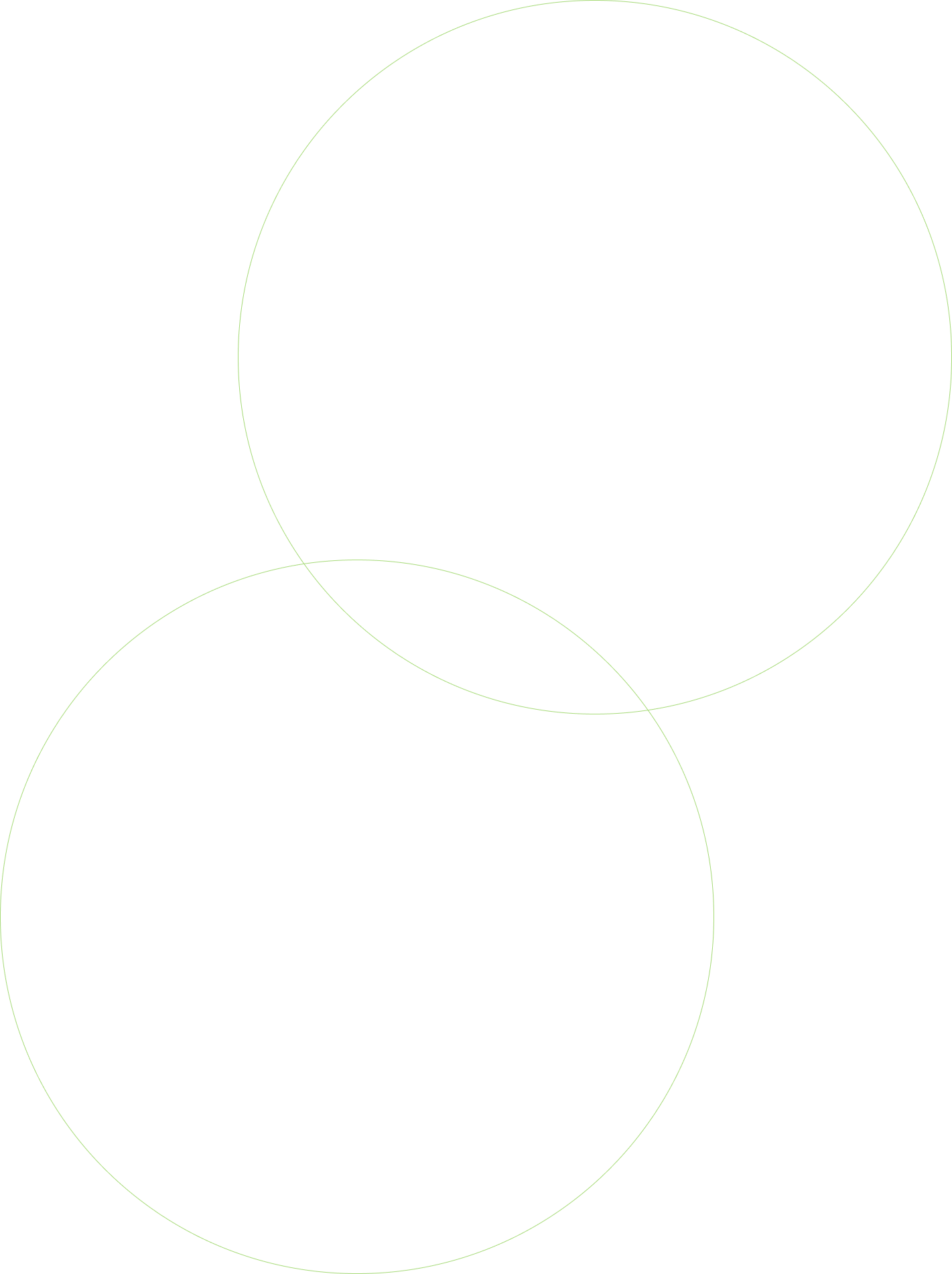

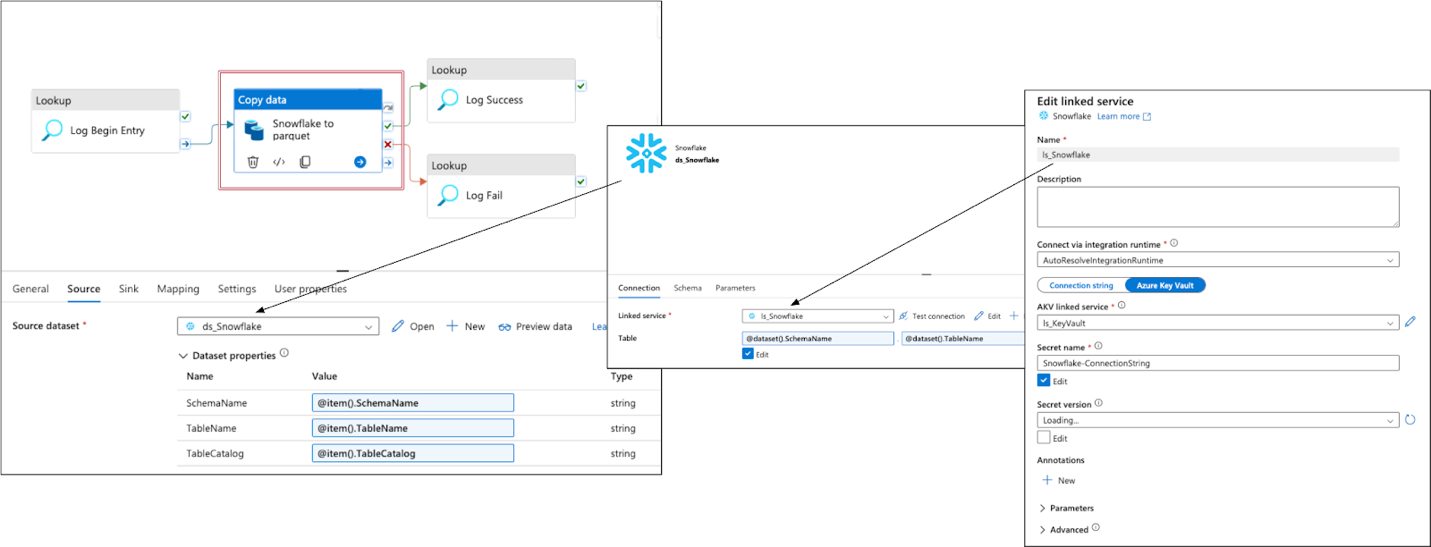

In this example, an ADF pipeline copies data from Snowflake to a Parquet file in Azure Data Lake Storage (ADLS). The pipeline accepts three parameters, "SchemaName," "TableName," and "TableCatalog," which together identify the source table in Snowflake by database (catalog), schema, and table name.
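Behind the visual designer, an ADF pipeline is stored as a JSON definition. The Python sketch below builds such a definition as a dictionary to show one way the three parameters could flow into a Copy activity. It is an illustration under assumptions, not the author's pipeline: the pipeline, activity, and source dataset ("ds_snowflake") names are hypothetical; building a fully qualified source query from the parameters is just one possible approach (the original may pass them to a parameterized dataset instead); the "SchemaName"/"TableName" parameters on "ds_parquet" are assumed; and the type names follow the ADF Snowflake and Parquet connector documentation as we understand it, so verify them against the connector version you use. According to that documentation, direct copy from Snowflake requires the sink linked service to use SAS authentication; because the sink in this example authenticates with a managed identity, a staged copy (`enableStaging`/`stagingSettings`) would be needed, and this sketch leaves it out.

```python
import json

# Hypothetical names (not from the original pipeline): "pl_snowflake_to_parquet",
# "CopySnowflakeToParquet", and the Snowflake source dataset "ds_snowflake".
PIPELINE_PARAMETERS = ("SchemaName", "TableName", "TableCatalog")

# ADF string interpolation: each @{...} is evaluated at run time, so the query
# selects from <TableCatalog>.<SchemaName>.<TableName> (database.schema.table).
SOURCE_QUERY = (
    "SELECT * FROM "
    "@{pipeline().parameters.TableCatalog}."
    "@{pipeline().parameters.SchemaName}."
    "@{pipeline().parameters.TableName}"
)


def expr(value: str) -> dict:
    """Wrap a string as ADF dynamic content, the form ADF Studio serializes."""
    return {"value": value, "type": "Expression"}


def build_copy_pipeline() -> dict:
    """Return a pipeline definition with three string parameters and one Copy activity."""
    return {
        "name": "pl_snowflake_to_parquet",
        "properties": {
            # Each parameter is a string supplied at run time (e.g. by a trigger).
            "parameters": {name: {"type": "string"} for name in PIPELINE_PARAMETERS},
            "activities": [
                {
                    "name": "CopySnowflakeToParquet",
                    "type": "Copy",
                    "inputs": [{"referenceName": "ds_snowflake", "type": "DatasetReference"}],
                    # Assumes ds_parquet declares SchemaName/TableName dataset parameters.
                    "outputs": [
                        {
                            "referenceName": "ds_parquet",
                            "type": "DatasetReference",
                            "parameters": {
                                "SchemaName": expr("@pipeline().parameters.SchemaName"),
                                "TableName": expr("@pipeline().parameters.TableName"),
                            },
                        }
                    ],
                    "typeProperties": {
                        "source": {
                            "type": "SnowflakeSource",
                            "query": expr(SOURCE_QUERY),
                            "exportSettings": {"type": "SnowflakeExportCopyCommand"},
                        },
                        "sink": {
                            "type": "ParquetSink",
                            "storeSettings": {"type": "AzureBlobFSWriteSettings"},
                        },
                    },
                }
            ],
        },
    }


if __name__ == "__main__":
    print(json.dumps(build_copy_pipeline(), indent=2))
```

Printing the dictionary as JSON gives a definition in the same shape as the code view in ADF Studio, which makes it easy to compare against a real pipeline.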

For security, the pipeline connects to Snowflake through an ADF linked service named "ls_Snowflake." Rather than embedding the Snowflake connection string, the linked service references it as a secret stored in Azure Key Vault, so sensitive credentials never appear in the pipeline or linked service definitions.
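A linked service that pulls its connection string from Key Vault might look like the sketch below, again built as a Python dictionary and printed as JSON. The Key Vault linked service name ("ls_KeyVault") and the secret name are hypothetical placeholders, and the `Snowflake` type corresponds to the legacy Snowflake connector, which takes a connection string (the newer connector uses a different type and property set). For ADF to resolve the secret at run time, the data factory's managed identity must be granted permission to read secrets in the vault.

```python
import json

# Hypothetical placeholders (not from the original setup): the Key Vault
# linked service name and the secret that holds the connection string.
KEY_VAULT_LINKED_SERVICE = "ls_KeyVault"
SECRET_NAME = "snowflake-connection-string"


def build_snowflake_linked_service() -> dict:
    """Return a Snowflake linked service whose connection string is a Key Vault reference."""
    return {
        "name": "ls_Snowflake",
        "properties": {
            # "Snowflake" is the legacy connector type, which accepts a connection string.
            "type": "Snowflake",
            "typeProperties": {
                # No credential is embedded: ADF fetches the secret from Key Vault at run time.
                "connectionString": {
                    "type": "AzureKeyVaultSecret",
                    "store": {
                        "referenceName": KEY_VAULT_LINKED_SERVICE,
                        "type": "LinkedServiceReference",
                    },
                    "secretName": SECRET_NAME,
                }
            },
        },
    }


if __name__ == "__main__":
    print(json.dumps(build_snowflake_linked_service(), indent=2))
```

Because no `secretVersion` is pinned, ADF reads the latest version of the secret, so the credential can be rotated in Key Vault without editing the linked service.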

On the sink side, the pipeline writes to a dataset named "ds_parquet," which points to Azure Data Lake Storage Gen2 (ADLS Gen2). That dataset uses an ADF linked service named "ls_adls," which authenticates with the data factory's system-assigned managed identity, so no storage keys need to be stored or rotated. During the copy, the Copy activity converts the Snowflake rows into the columnar Parquet format, and the output lands in an ADLS Gen2 container, a scalable storage layer well suited to big data workloads.
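The sink configuration described above can also be sketched as JSON built in Python. In this illustration, "ls_adls" specifies only the account URL, which for the ADLS Gen2 (`AzureBlobFS`) connector means ADF authenticates with the data factory's system-assigned managed identity; that identity also needs a data-plane role on the storage account, such as Storage Blob Data Contributor, to write files. The container name, the dataset parameters, and the `<SchemaName>/<TableName>.parquet` layout are assumptions for the example, not details from the original pipeline.

```python
import json


def build_adls_linked_service(account_url: str) -> dict:
    """ADLS Gen2 linked service; with only a URL, ADF uses its system-assigned managed identity."""
    return {
        "name": "ls_adls",
        "properties": {
            "type": "AzureBlobFS",
            # No key, SAS token, or service principal: managed identity authentication.
            "typeProperties": {"url": account_url},
        },
    }


def build_parquet_dataset(container: str) -> dict:
    """Parquet dataset on ADLS Gen2; SchemaName/TableName are hypothetical dataset parameters."""
    return {
        "name": "ds_parquet",
        "properties": {
            "type": "Parquet",
            "linkedServiceName": {"referenceName": "ls_adls", "type": "LinkedServiceReference"},
            "parameters": {
                "SchemaName": {"type": "string"},
                "TableName": {"type": "string"},
            },
            "typeProperties": {
                "location": {
                    "type": "AzureBlobFSLocation",
                    # The ADLS Gen2 container (file system) that receives the files.
                    "fileSystem": container,
                    # Writes to <container>/<SchemaName>/<TableName>.parquet
                    "folderPath": {"value": "@dataset().SchemaName", "type": "Expression"},
                    "fileName": {
                        "value": "@concat(dataset().TableName, '.parquet')",
                        "type": "Expression",
                    },
                },
                "compressionCodec": "snappy",
            },
        },
    }


if __name__ == "__main__":
    # Placeholder account URL; replace <storage-account> with a real account name.
    definitions = [
        build_adls_linked_service("https://<storage-account>.dfs.core.windows.net"),
        build_parquet_dataset("raw"),
    ]
    print(json.dumps(definitions, indent=2))
```

Snappy compression is set explicitly here for clarity, although it is the default codec for Parquet datasets in ADF.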

Overall, this pipeline offers a reliable and secure way to move data from Snowflake into ADLS as Parquet files, making it available for further integration and analysis in the Azure environment.

Integration Advantages

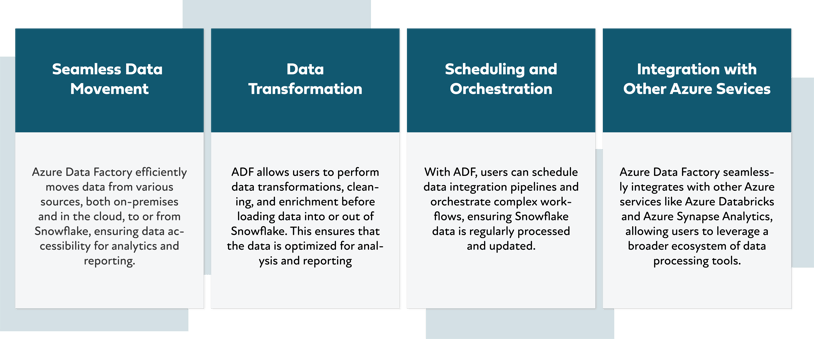

The integration between Azure Data Factory and Snowflake gives data-driven organizations several capabilities, including seamless data movement, data transformation, scheduling and orchestration, and integration with other Azure services.

Beyond these capabilities, the integration offers the following benefits:

- Cost-effective model: ADF's serverless architecture and pay-as-you-go pricing model enable organizations to optimize costs based on their data processing needs.

- Scalability and performance: Snowflake separates storage from compute so that warehouses can scale independently, and combined with ADF's ability to handle large-scale data movement and processing, this helps workloads keep performing well as data volumes grow.

- Data availability: The seamless integration ensures that an organization's data is readily available for analytical purposes, enabling faster insights and decision-making.

- Flexibility and versatility: ADF supports a wide range of data sources and destinations, and Snowflake works with many analytics tools, giving teams flexibility in how they process and use their data.

By leveraging the strengths of Azure Data Factory and Snowflake, businesses can unlock the true potential of their data and gain valuable insights for improved decision-making. Whether it is seamless data movement, flexible data processing, or cost-effective scalability, the Azure Data Factory and Snowflake integration provides a comprehensive data solution for modern data-driven enterprises.