Big things come in small packages, as they say, and when it comes to speeding up the process of developing and deploying applications, few tools are as powerful (or as easily overlooked) as Kubernetes pods.

Pods are the smallest deployable units of computing in Kubernetes. A pod is a group of one or more containers that share storage and network resources, along with a specification for how to run those containers.

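The most common usage of pods is the “one-container-per-pod” model, in which the pod wraps a single container and Kubernetes manages the pod rather than the container directly. But pods can also house an application made up of multiple co-located, tightly coupled containers that share resources to perform a single function, such as a main application container paired with a helper “sidecar” container.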
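To make the multi-container model concrete, here is a minimal sketch that generates a pod manifest as JSON (which `kubectl apply -f` accepts alongside YAML). It assumes you build manifests from Python; the pod name, container names, `busybox:1.36` image, and `/var/log/app` path are illustrative choices, not anything prescribed by Kubernetes. The two containers share an `emptyDir` volume, so a file written by one is readable by the other.

```python
import json


def sidecar_pod(name: str) -> dict:
    """Build a Pod manifest with two containers sharing one emptyDir volume.

    Container names, image tag, and mount path are illustrative assumptions.
    """

    def shared_mount() -> dict:
        # Both containers mount the same named volume at the same path.
        return {"name": "shared-logs", "mountPath": "/var/log/app"}

    return {
        "apiVersion": "v1",
        "kind": "Pod",
        "metadata": {"name": name},
        "spec": {
            "containers": [
                {
                    # Main application container: appends a timestamp to a log file every 5s.
                    "name": "app",
                    "image": "busybox:1.36",
                    "command": ["sh", "-c",
                                "while true; do date >> /var/log/app/out.log; sleep 5; done"],
                    "volumeMounts": [shared_mount()],
                },
                {
                    # Sidecar container: follows the same file through the shared volume.
                    "name": "log-reader",
                    "image": "busybox:1.36",
                    "command": ["sh", "-c",
                                "touch /var/log/app/out.log; tail -f /var/log/app/out.log"],
                    "volumeMounts": [shared_mount()],
                },
            ],
            # An emptyDir volume lives as long as the pod and is visible
            # to every container in the pod that mounts it.
            "volumes": [{"name": "shared-logs", "emptyDir": {}}],
        },
    }


if __name__ == "__main__":
    print(json.dumps(sidecar_pod("log-demo"), indent=2))
```

Piping the output into `kubectl apply -f -` would create the pod; both containers are scheduled onto the same node together because they belong to the same pod.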

What makes a pod powerful

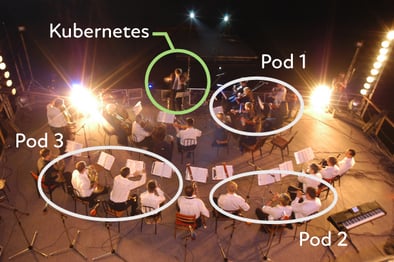

If you were to think of modern application development as an orchestral performance, then containers would be the musicians, pods would be the instrument sections (strings, brass, etc.), and Kubernetes would be the conductor.

Similar to how instrument sections work in unison to create a much fuller and richer sound, pods make it possible for containers to work together by simplifying communication and data sharing between them.

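Under the hood, each pod is assigned its own unique IP address, which makes it easier and more efficient for pods to find and communicate with each other within the cluster. Containers in the same pod share that IP address and network namespace, so they can reach one another over localhost. Pods also contain configuration details for how each container should run and, if needed, storage volumes (including persistent volumes) that their containers can share.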

Together, these components greatly streamline communication between containers, and streamlined communication means less complexity for developers to worry about.

Another element that makes pods powerful is that they are ephemeral. Once you create a pod (or a controller creates one for you), it is scheduled to run on a node in the cluster. The pod remains on that node until it finishes running, the pod object is deleted, it is evicted for lack of resources, or the node fails.

This disposable design helps ensure resources are used efficiently and, combined with the ability of controllers to create and replicate pods, makes it easy for applications to scale horizontally. It also makes capabilities like self-healing possible: when a pod fails or its node goes down, a controller can replace it with a new one.
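As a rough illustration of controller-driven replication, the sketch below builds a Deployment manifest in the same JSON-from-Python style. The `web` name, `nginx:1.25` image, and port 80 are assumptions for the example, and `web_deployment` and `scaled` are hypothetical helpers, not part of any Kubernetes library. The Deployment's `replicas` field is the desired pod count, and its pod `template` is the blueprint every replica is created from.

```python
import copy
import json


def web_deployment(name: str, image: str, replicas: int) -> dict:
    """Build a Deployment manifest whose controller keeps `replicas` identical pods running.

    Hypothetical helper; name, image, and port are illustrative assumptions.
    """
    if replicas < 0:
        raise ValueError("replicas must be non-negative")
    labels = {"app": name}
    return {
        "apiVersion": "apps/v1",
        "kind": "Deployment",
        "metadata": {"name": name},
        "spec": {
            # Desired number of pods; the controller creates or replaces pods to match it.
            "replicas": replicas,
            # The selector tells the controller which pods it owns; it must match the template labels.
            "selector": {"matchLabels": dict(labels)},
            # Pod template: every replica is stamped out from this spec.
            "template": {
                "metadata": {"labels": dict(labels)},
                "spec": {
                    "containers": [
                        {"name": name, "image": image, "ports": [{"containerPort": 80}]}
                    ]
                },
            },
        },
    }


def scaled(manifest: dict, replicas: int) -> dict:
    """Return a copy of a Deployment manifest with a new replica count (horizontal scaling)."""
    if replicas < 0:
        raise ValueError("replicas must be non-negative")
    updated = copy.deepcopy(manifest)  # leave the original manifest untouched
    updated["spec"]["replicas"] = replicas
    return updated


if __name__ == "__main__":
    base = web_deployment("web", "nginx:1.25", replicas=3)
    print(json.dumps(scaled(base, 5), indent=2))
```

Applying the scaled manifest has the same effect as `kubectl scale deployment web --replicas=5`. Self-healing follows from the same mechanism: if one of the pods is deleted or its node fails, the Deployment's ReplicaSet notices the count is below the desired number and creates a replacement pod from the template.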

Finally, pods (by virtue of their containers) are portable and run the same way in any Kubernetes cluster. This portability is key to making a hybrid cloud achievable: with Kubernetes running everywhere, pods can be deployed and run the same way whether on GCP, AWS, Azure, or your own datacenter servers.

One of many powerful Kubernetes elements

Obviously, pods are nothing new to those already well-versed in containers and Kubernetes in general.

But for those just starting out with this increasingly prevalent model of application development, it’s worth understanding just how important and powerful pods can be.

Adopting new ways of developing products and services can be a challenge. Learn how you can alleviate key pain points like lack of talent & expertise, security requirements, and more by reading our in-depth guide to deploying managed Kubernetes on-premises.